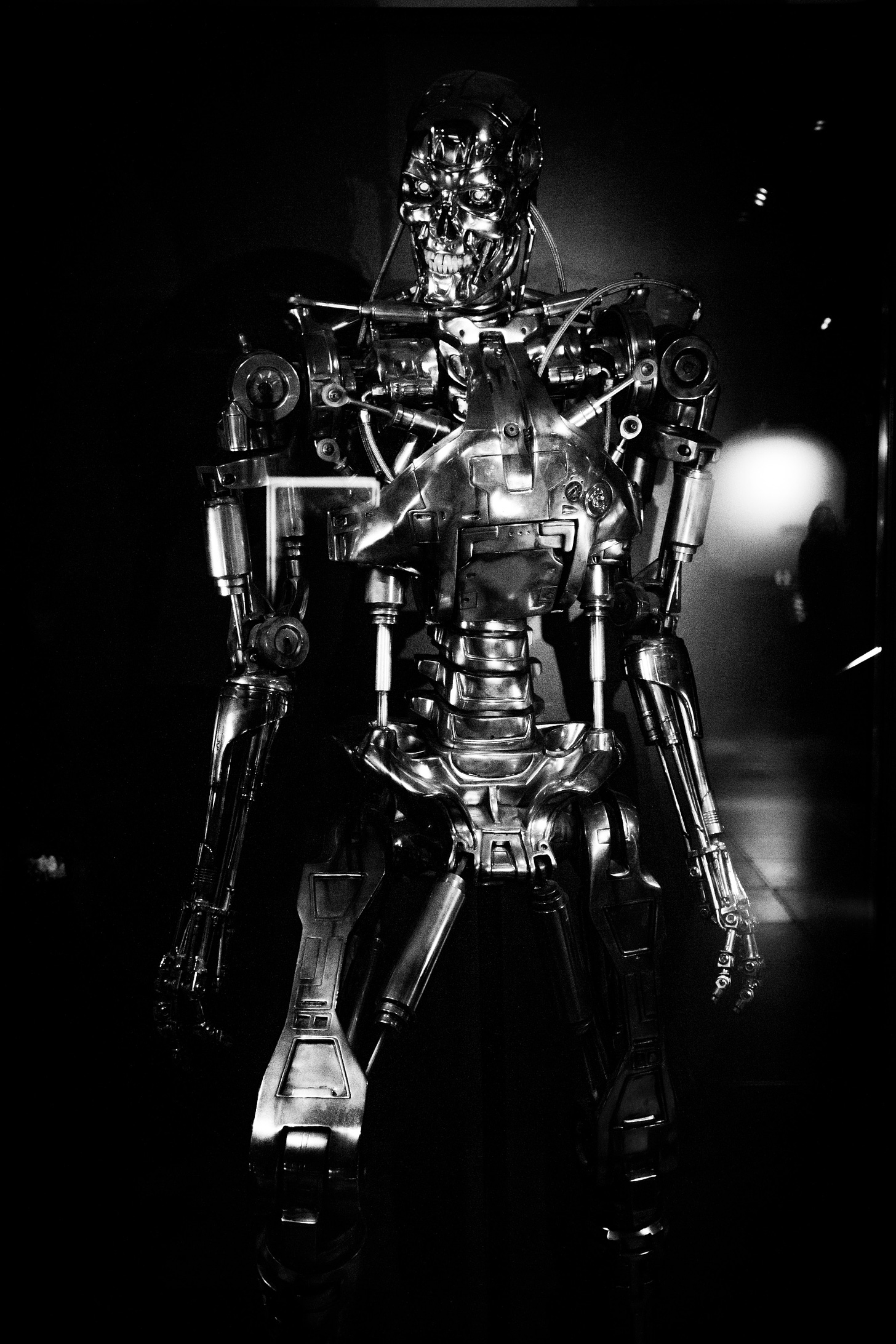

With summer just a few weeks away, the lists of summer movies are starting to appear. Entertainment talk lately has turned to whether James Cameron (The Terminator, Avatar, Titanic et al.) will make yet another Terminator movie. What does this have to do with tech? Read on.

The movie, if you recall, is about a future where sentient robots have taken over the world. Their mission: to eradicate humanity. It was science fiction, but how often do these films turn out to be prescient? (See: How a movie predicted Ohio's toxic derailment.)

We can also tell you on fairly good authority that another Terminator movie is in serious discussion, especially since we seem to be at least close to Skynet going live, the last part of The Terminator tech trifecta.

Close? Let’s do the math…

In case you missed it: “OpenAI CEO Signs Letter Warning AI Could Cause Human ‘Extinction.’” Massive cognitive dissonance incoming. “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war,” reads the statement signed by Sam Altman, who, out of the other side of his mouth, is doing more to promote LLMs than anyone. The letter, for the record, was signed by OpenAI co-founders Sam Altman and Ilya Sutskever, Harvard legal scholar Laurence Tribe, and Google DeepMind CEO Demis Hassabis (among others).

LLMs, or generative AIs, are increasingly discussed as a threat to humanity. Thank you so much for raising the red flag, Sam, but before you released your AI into the wild, did you think to build in a kill switch? Or some tech that could put the genie back into the bottle? Didn’t think so. Monday morning quarterbacking is all well and good, but it never works. Or did you simply not consider the bigger picture?

And what exactly is the bigger picture? This just in: “Oh Great, They Put ChatGPT Into a Boston Dynamics Robot Dog.” “As if robotic dogs weren't creepy enough,” wrote Futurism of the pup, brought to you by the same good people who bring you killer robots. And, “In February, Microsoft, which signed a multi-billion dollar deal with OpenAI, released a paper outlining design principles for ChatGPT integration into robotics.” Not to mention the fact that ChatGPT can now control Boston Dynamics robots. “Is it time to panic?” Boston asked.

Shelly Palmer wrote an interesting piece, “AI: The Unseen Threat and the AGI Smoke Screen,” cataloguing “ten ways current narrow-focused, application-specific AI can weaponize data with the potential to do serious harm to individuals, companies, and even governments. (The list could be much longer.) None of these use cases require super intelligence or AGI. All are possible today with existing readily available tools.” He made good points, but he missed the forest for the trees when it comes to the true smoke screen.

Which brings us back to The Terminator.

According to DNYUZ, by way of The New York Times, “Microsoft Says New A.I. Shows Signs of Human Reasoning.” As we barrel at top speed toward a potentially very dystopian future, all that seems to be missing is Skynet, linking the AI-enabled robots and everything else globally.

Unless you consider that, as PCMag reported, “SpaceX's Starlink Now Has Over 4,000 Satellites in Orbit.” “As capacity for the first-gen Starlink constellation reaches its limit, SpaceX also signals that it's preparing numerous launches for the second-generation network.” As of January, SpaceX rival OneWeb reported “542 satellites now in orbit…has more than 80% of its first-generation constellation launched.” So there is already a constellation of LEO (Low Earth Orbit) satellites blanketing the planet with high-speed, low-latency connectivity, and radiation, of course. Very soon, if we haven’t reached that point already, every inch of Earth will be connected to the smart grid.

Sentient robots, too.

There are even reports that 5G is a directed energy weapon system.

Can the AI cabal self-regulate, considering what they’ve done to our privacy with their massive surveillance? Wasn’t Facebook allowed to self-regulate, and we see what happened there (“The Facebook Oversight Board: An Experiment in Self-Regulation”). And self-regulate on which front?

It’s all connected, and tech isn’t exactly known for long-term thinking or for weighing the consequences of the products it releases. “Move fast and break things” is not the direction to go, and never was, especially in a world where people are immediately seduced by whatever shiny new thing comes along, believing that the technology is there to make life easier (which was the big promise, after all), with no consideration at all for the long-term consequences.

A Terminator reboot may well be critical at this juncture, and hopefully people will forgive the bad films that came out of the franchise following Terminator 2. It’s time to wake up, and a reboot may well be the thing that helps wake people up. Otherwise, let’s face it, it may be a case of “hasta la vista, baby” as we go onward and forward.

I have yet to be convinced that AI will become anti-human on its own. Certainly possible, but I believe highly unlikely.

The issue is that AI is not "on its own." It has human influence all over it and in it, both unintentional and intentional.

For example, LLM training sets are (mostly) human-created, human-determined, and human-filtered. AI has not evolved on its own over millennia in Earth's environment. It's built by humans from human-created algorithms that later branch off and are superseded by ML, in a microcosm.

Humans are still in control of AI models and give them commands.

So in the end, it's the combination of AI and humans that is the massive concern. When Sam Altman or any insider speaks about AI dangers, they not only know this all too well, they also know who is being given controlling access, and how that access is being apportioned.

Until I see otherwise, I'm willing to give AI the benefit of the doubt, but not the humans involved in decision-making around it.

Musk? Yeah, a totally untrustworthy hypocrite. Human. (I think?)